In March 2026, the publishing world found itself confronting one of its most unsettling questions yet: what happens when the boundary between human creativity and machine-generated content becomes indistinct? The controversy surrounding Shy Girl, a horror novel by Mia Ballard, brought this question into sharp and uncomfortable focus. Originally published in the United Kingdom in late 2025, the book was scheduled for a wider release in the United States through Hachette Book Group. That release was abruptly halted. The publisher not only withdrew the American edition but also discontinued the UK version, a decision that signalled far more than routine editorial reconsideration. It marked a moment of deep institutional anxiety about authorship, authenticity and the future of literary production.

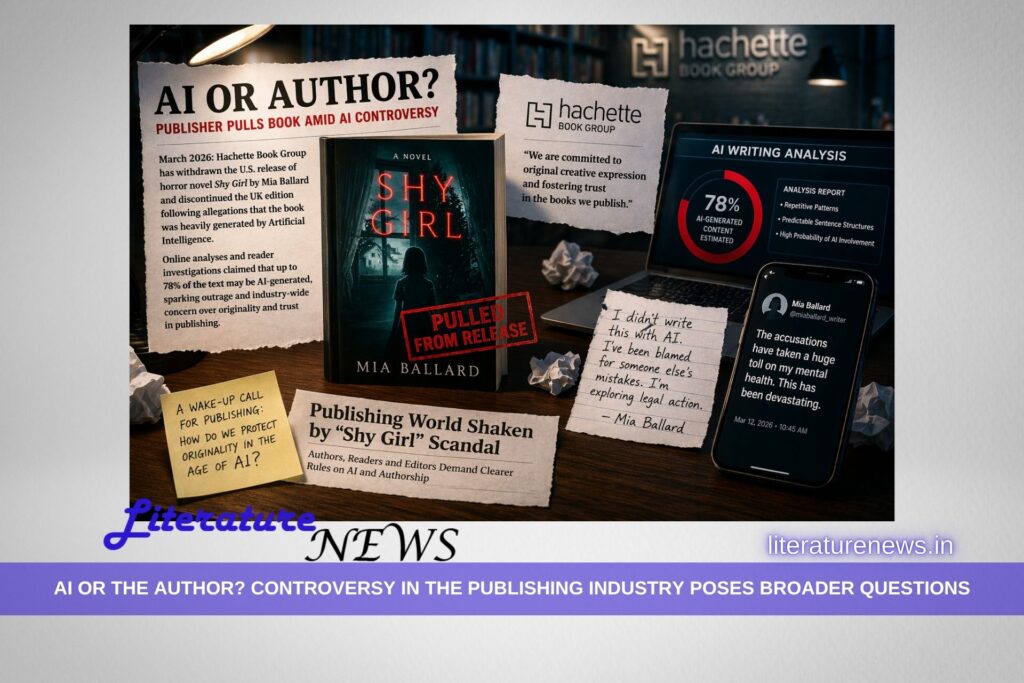

The immediate trigger for this withdrawal was a wave of online scrutiny that questioned the originality of the text. Analysts and readers began to examine the novel closely, with some claiming that a significant portion of the work bore the hallmarks of AI-generated writing. One widely circulated estimate suggested that as much as 78 per cent of the text could have been produced with the assistance of artificial intelligence. Whether or not such figures can be verified with precision is almost beside the point. What matters is that the perception of machine authorship was strong enough to compel one of the world’s largest publishing houses to take decisive action.

Hachette’s public response was carefully worded but unmistakably firm. The company reiterated its commitment to “original creative expression,” a phrase that now carries new weight in an industry increasingly shaped by technological intervention. The withdrawal of Shy Girl was not framed as a punitive measure against an individual author, but as a defence of a principle. Yet beneath this measured language lies a deeper uncertainty. What exactly constitutes originality in an era where writers may use AI tools for drafting, editing, or ideation? And where should publishers draw the line between assistance and authorship?

Mia Ballard, for her part, strongly denied that the novel was primarily generated by artificial intelligence. She attributed the controversy to earlier versions of the manuscript, suggesting that an editor associated with a self-published edition may have introduced AI-generated material without her full awareness. Ballard also indicated that she was considering legal action, arguing that the allegations had damaged her reputation and professional standing. Beyond the legal and professional dimensions, there were also human consequences. Reports suggested that the intense scrutiny and public criticism had taken a toll on her mental health, highlighting the personal cost of a controversy that quickly escalated beyond the confines of literary debate.

The Shy Girl episode has unsettled the publishing industry not simply because of the specifics of the case, but because of what it reveals about the fragility of existing frameworks. For decades, publishing has relied on a relatively stable understanding of authorship. A book was assumed to be the product of a human mind, shaped through imagination, labour and revision. AI disrupts that assumption at its core. It introduces the possibility that a text may be partially or substantially generated by systems trained on vast corpora of existing literature, raising questions about originality, ownership and ethical responsibility.

What many insiders describe as a “cold shiver” running through the industry stems from the realisation that detection itself is uncertain. Unlike plagiarism, which can often be identified through direct textual comparison, AI-generated content does not necessarily replicate existing passages. It produces new combinations, making it far more difficult to prove or disprove machine involvement. This ambiguity places publishers in a precarious position. Act too late, and they risk reputational damage. Act too quickly, and they risk penalising authors without conclusive evidence.

The controversy has also exposed a widening gap between technological capability and institutional preparedness. Writers are already experimenting with AI tools in various ways, from brainstorming ideas to refining prose. Some view these tools as extensions of the creative process, analogous to earlier technological shifts such as word processors or digital research databases. Others see them as fundamentally different, arguing that AI introduces an external agency into what has traditionally been an intensely personal act of creation. The industry has yet to arrive at a consensus, and the Shy Girl case demonstrates how urgently such a consensus is needed.

From an editorial standpoint, the incident raises uncomfortable but necessary questions. Should publishers require full disclosure of AI usage in manuscripts? If so, how detailed should that disclosure be? Is there a threshold beyond which a work ceases to be considered authentically human? And perhaps most critically, how can these standards be enforced in a way that is both fair and transparent? At present, there are few clear answers. What exists instead is a growing recognition that the rules governing literary production are being rewritten in real time.

There is also a cultural dimension to this debate that cannot be ignored. Literature has long been valued not only for its aesthetic qualities but for its human origins. Readers engage with books as expressions of individual consciousness, shaped by lived experience and emotional depth. The suspicion that a work may be largely machine-generated disrupts that relationship. It introduces a layer of doubt that affects not only the text itself but the reader’s trust in the publishing ecosystem as a whole.

At the same time, it would be simplistic to frame the issue as a binary conflict between human and machine. The reality is more complex. AI tools are already embedded in many aspects of writing and publishing, from grammar checking to market analysis. The challenge lies in distinguishing between augmentation and substitution, between tools that support creativity and systems that replace it. The Shy Girl controversy forces the industry to confront this distinction now, rather than defer it any longer.

In many ways, this incident may prove to be a turning point. It has compelled publishers, authors and readers to engage with questions that were previously theoretical and had perhaps never been raised in boardrooms. It has exposed vulnerabilities in editorial processes and highlighted the need for clearer ethical guidelines. Most importantly, it has underscored the value of transparency. In an environment where the origins of a text can no longer be taken for granted, openness about the creative process may become as important as the work itself.

Let me also pose an even broader question. Why do publishers fear AI-generated text? If the ultimate goal of reading is to derive pleasure and relief, why should the origin of a text become an issue at all? As long as a text gives us reading pleasure, I would refrain from delving too deeply into its genesis. Opinions will, of course, vary, and serious readers (as well as critics) may raise even more serious questions. However, the matter deserves to be debated with an open mind rather than settled by simply cancelling AI-generated text.

For now, the fate of Shy Girl remains a cautionary tale. It is a reminder that the publishing industry is entering a period of profound transformation, one in which the definition of authorship itself is under negotiation. The anxiety it has generated is not merely about one book or one author. It is about the future of literature in an age where the line between human imagination and artificial generation is becoming increasingly difficult to discern.

Ashish for Literature News